Books & Culture

What It’s Like to Stand Inside a Poem

A digital storytelling experiment turns poetry into immersive art

I can’t resist a cryptic invitation. So last month, when I got an email about a “weird interactive storytelling digital art experiment,” I was there for it.

The email came from my friend Max Neely-Cohen, a skater-turned-novelist who I’ve long suspected moonlights as a spy due to his lengthy, unexplained disappearances from New York. “Some brilliant nerds are going to help me to make a space that visually responds to poetry and prose as it is read aloud,” he wrote. “Imagine giving a reading somewhere and having the environment change based on what you read. And being able to control those changes.”

I could not, in fact, imagine that, so I said I’d stop by.

The project formed as part of a week-long micro-residency at CultureHub, an art and technology center founded in partnership with New York’s La MaMa Experimental Theatre Club and the Seoul Institute of the Arts in Korea.

I visited the space in NoHo on a humid Friday, riding a rickety elevator into a large, black-walled studio. Several metal chairs lined one side of the room, facing a wide screen on the opposite wall. Toward the front, Max’s assistant, NYU ITP student Oren Shoham, manned a laptop surrounded by wires; toward the back, a microphone stood next to a table stacked with books.

Combing through the books, I found This Planet is Doomed, a collection of science fiction poetry by Sun Ra, the legendary Afrofuturist jazz musician (and composer, bandleader, poet, philosopher, and and and). Because my dad is a jazz guy and I was a kid who identified as an alien, I was introduced to Sun Ra at a young age. Even then, I recognized that Sun Ra was, if not the coolest person to have ever lived, definitely in the top five. His science fiction poetry seemed the perfect input for a spatially overwhelming poetry synthesizer.

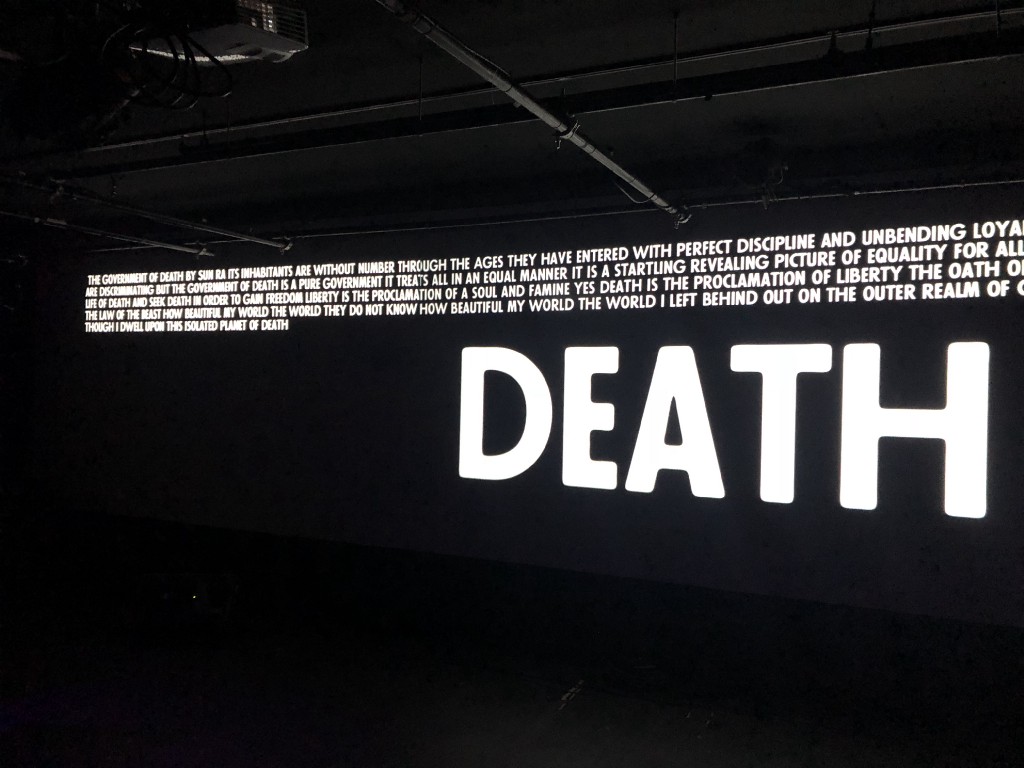

I picked two poems, “The Government of Death” and “Planet of Death,” and stood behind the microphone with two cameras trained on my face. The room went dark, Sun Ra spotlighted. As I read, words flashed in my peripheral vision, though I couldn’t fully see the adjacent imagery.

“[A]ll governments / on earth / set up by men / are discriminating / but the government of death is a / pure government,” writes Sun Ra. “I gave up my life and am here on / this planet / of death / in order to teach my enemies that their / life is nothing else / but death / and that their planet was isolated from / the cosmic spheres / whence I gave up my life.”

Including titles, “death” is repeated twenty-one times in the poems. After finishing, I saw “DEATH” in huge, all-caps letters on a black screen. I was briefly speechless, then noted that the whole thing was Incredibly Goth.

Max Neely-Cohen says he’s long harbored the idea for this kind of project, but wasn’t sure existing technology could manage what he had in mind. “There are all these visuals that work off of different parameters of live music,” he briefed me over the phone, after my visit. “A lot of them are just volume, but more sophisticated ones can analyze pitch and all these different things. They create a visual space out of that. I wondered, can you do that with a reading? For a really long time, the answer I got was ‘no.’ And the reason is that speech-to-text sucks for live transcription. But it’s been getting better.”

Max and Oren used a speech-to-text API (application programming interface) from Google Cloud and hooked it up to EmoLex, a database compiled by computer scientist Saif Mohammad from crowdsourced associations between words, emotions, and sentiments, including color associations. When I read “The Government of Death” into the microphone, my audio went to Google Cloud for transcription, then through EmoLex for visualization, and the result was zapped back to the studio as a giant, gloomy DEATH screen.
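For the curious, the lookup step at the heart of that pipeline can be sketched in a few lines of Python. This is a toy under stated assumptions, not Max and Oren's code: the transcript is a plain string standing in for whatever the speech-to-text service returns, `TOY_LEXICON` is a hypothetical three-word stand-in for EmoLex (its colors simply echo what readers reported seeing on the screen), and the function names `tokenize` and `pick_display` are invented for illustration.

```python
import re
from collections import Counter

# Hypothetical stand-in for a word-association lexicon. These entries are
# invented for illustration and are NOT taken from the real EmoLex data.
TOY_LEXICON = {
    "death": {"color": "black"},
    "grief": {"color": "green"},
    "body": {"color": "orange"},
}


def tokenize(transcript):
    """Lowercase the transcript and split it into bare words."""
    return re.findall(r"[a-z']+", transcript.lower())


def pick_display(transcript, lexicon=TOY_LEXICON):
    """Return (WORD, color, count) for the most frequent lexicon word.

    Returns None when no word in the transcript appears in the lexicon.
    """
    counts = Counter(w for w in tokenize(transcript) if w in lexicon)
    if not counts:
        return None
    word, n = counts.most_common(1)[0]
    return word.upper(), lexicon[word]["color"], n


if __name__ == "__main__":
    # A plain string stands in for live speech-to-text output.
    line = "The government of death is a pure government. This planet of death."
    print(pick_display(line))
```

A live version would presumably run this on each chunk of transcript as it arrives and hand the result to whatever draws the screen; that part is left out here because the article does not describe it.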

Writer Moira Donegan, who read a piece about a black and white film, had a particularly poignant experience with the project’s chromatic element. “Seeing those words rendered in color, rendered as color, added a series of associations to the work that I hadn’t had before,” she said. “It was pretty stunning to see them rendered that way as I was speaking (‘grief’ as green, ‘body’ as orange), whereas my experience of the material before had all been in greys.”

Other testers I spoke with responded to different aspects of the installation, perhaps depending on what they were reading or on their professional backgrounds.

Bloomsbury editor Ben Hyman read a selection of Frank O’Hara poems, and noted that “O’Hara’s work is intricately linked to his particular social world of friends and collaborators, and to the contemporary art of his time — in addition to being a poet, he was a curator of painting and sculpture at MoMA. It felt like he’d be the perfect ancestor to introduce to Max’s clever machine. I think Frank would have gotten a kick out of it.”

“The exhibit felt like ekphrasis in practice,” said writer Becca Schuh. “Creating a new form of art via commentary on already existing works. It was an odd day in New York, humid and sad (Anthony Bourdain died the morning I went to the project), and it was both surreal and beneficial to step away from the oppressive air and into this new atmosphere.”

Writer and editor Bourree Lam took a different route, and read a series of text messages she had exchanged with her husband. “I write about economics, and originally I had planned to read something very mundane like a jobs report or Federal Reserve meeting,” she said, “but then when it came time I didn’t want to read something with so many numbers…I ended up reading an exchange with my husband that pretty much sums up our communication ritual every evening since we got married: When are you coming home? Is work crazy? Are you coming home for dinner? Who’s in charge of dinner? What’s the plan?…Standing in those texts, I felt like I was sharing a part of my relationship with the world. We literally have this exchange every weeknight. It’s really personal, but also really mundane. It was that banal/sublime tension of art that draws from the quotidian (not that I’m calling what I read ‘art’!)…seeing the texts on the big screen made me realize I don’t mind sharing some parts of my relationship.”

Meghann Plunkett, a coder as well as a writer, was perhaps in a unique position to appreciate the project’s technical elements. “I was so thrilled to see that someone was using APIs for art’s sake,” she told me in an email. “Often we see technology utilized to solve problems and disrupt markets. My heart soared to see that Max was using an API to embellish an experience instead of trying to change that experience. I loved that the speech-to-text feature was coupled with an author’s reading without overshadowing it. With innovation like this, it opens up the possibility for other artists to view open source APIs as small platforms for literature, art and performance. It gives me hope that technology and art can co-exist in a symbiotic, balanced relationship.”

Max is returning for part two of the residency in the fall, and emphasized how much more is possible: “We could use the same dictionary database, or a different one, and control all sorts of parameters. We could use a reading to grow a garden, or build a city. This can get more sophisticated, more visually interesting. This was a super-fast initial prototype. All we did was make it work. The amount we can do past that is unbelievable.”